Unraveling the Evolution of Neural Networks

The journey of neural networks spans more than six decades, a tale of innovation, setbacks, and breakthroughs. One of the earliest learning models, the Perceptron, introduced by Frank Rosenblatt in 1958, was a simple yet groundbreaking attempt to mimic how humans learn. Although it could not handle data that is not linearly separable, it established a foundational framework for understanding how machines could learn from inputs and make decisions.

Fast forward to the 1980s, when the popularization of the backpropagation algorithm marked a pivotal moment in the field. Backpropagation made it practical to train multi-layer neural networks, improving their ability to learn complex patterns and relationships within data. The seminal 1986 work by David Rumelhart, Geoffrey Hinton, and Ronald Williams catalyzed further research that eventually led to what we now call deep learning. Today's descendants of these multi-layer networks can contain dozens or even hundreds of layers, opening the door to previously unimaginable capabilities in predictive analytics and feature extraction.

From the late 2000s onward, deep learning techniques rose to prominence, improving the performance of neural networks and broadening their applicability. Image recognition became mainstream, enabling technologies such as facial recognition on social media platforms and object detection in security systems. Similarly, advances in natural language processing changed how people interact with machines, contributing to virtual assistants such as Apple’s Siri and Amazon’s Alexa.

The transformative impact of these advancements is evident across many sectors. In healthcare, neural networks power diagnostic systems that analyze medical images with high accuracy, facilitating early detection of diseases such as cancer. They also aid personalized medicine by analyzing genomic data and predicting how individual patients will respond to treatments.

In the realm of finance, the capabilities of neural networks enable robust fraud detection systems that can analyze vast amounts of transaction data in real time, identifying anomalies that may indicate fraudulent activities. Financial institutions now rely on these systems to safeguard transactions and develop risk assessment models.

Transportation is another sector benefiting profoundly from neural networks. The development of self-driving cars, powered by deep learning algorithms, demonstrates the potential of these technologies to enhance road safety and reduce accidents. Companies like Tesla and Waymo are at the forefront, utilizing intricate neural networks to process sensory inputs and make real-time navigational decisions.

As the field of neural networks continues to evolve, it raises intriguing questions about the future. How can we harness these technologies to further improve our daily lives? What ethical considerations must we address as these systems become more autonomous? These questions highlight the importance of responsible AI development as we work to unlock the full potential of intelligent systems and their societal impact.

The Birth and Rise of the Perceptron

The origins of neural networks can be traced back to the simple yet revolutionary Perceptron. Designed by Frank Rosenblatt, it was one of the first artificial neural networks: a single layer of neurons whose weights could be learned from input data. Acting as a binary classifier, it separated data into two distinct classes, showcasing the potential of machine learning. However, a single-layer perceptron can only draw a linear decision boundary, so it fails on problems that are not linearly separable, such as the XOR problem. This limitation, highlighted by Marvin Minsky and Seymour Papert in their 1969 book Perceptrons, sparked critical debate and contributed to a temporary stagnation in neural network research.
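To make the linear-separability limit concrete, here is a minimal Python sketch of the classic error-driven perceptron update with a step activation. It is an illustration, not Rosenblatt's original formulation (his Mark I Perceptron was hardware). The function names, learning rate, and epoch count are arbitrary assumptions. The same rule that learns AND perfectly can never reach full accuracy on XOR.

```python
# Minimal perceptron sketch: step activation + error-driven weight updates.
# Function names, learning rate and epoch count are illustrative assumptions.

def predict(w, b, x):
    """Return 1 if the weighted sum is positive, else 0 (a linear decision boundary)."""
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            err = target - predict(w, b, x)  # -1, 0 or +1
            # Weights move only when the prediction is wrong (err != 0)
            w[0] += lr * err * x[0]
            w[1] += lr * err * x[1]
            b += lr * err
    return w, b

def accuracy(samples, w, b):
    return sum(predict(w, b, x) == t for x, t in samples) / len(samples)

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND_DATA = [(x, x[0] & x[1]) for x in INPUTS]  # linearly separable
XOR_DATA = [(x, x[0] ^ x[1]) for x in INPUTS]  # not linearly separable

w_and, b_and = train_perceptron(AND_DATA)
w_xor, b_xor = train_perceptron(XOR_DATA)
print("AND accuracy:", accuracy(AND_DATA, w_and, b_and))  # 1.0
print("XOR accuracy:", accuracy(XOR_DATA, w_xor, b_xor))  # always below 1.0
```

No choice of epochs or learning rate fixes the XOR case. No single straight line separates {(0, 1), (1, 0)} from {(0, 0), (1, 1)}.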

The Resurgence: Backpropagation and the Birth of Deep Learning

The turning point for neural networks came in the 1980s with the popularization of the backpropagation algorithm. It enabled effective training of multi-layer neural networks, or multi-layer perceptrons (MLPs), allowing them to learn intricate patterns in data. Notably, the 1986 work of David Rumelhart, Geoffrey Hinton, and Ronald Williams brought neural networks back into the scientific spotlight. Backpropagation applies the chain rule to pass the error between the network's output and the expected result backward through the layers, yielding a gradient for every weight. Gradient descent then adjusts the weights to reduce that error, improving classification accuracy.
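The weight-update process described above can be sketched for a tiny network. The sketch assumes 2 inputs, 2 sigmoid hidden units, 1 sigmoid output, and a squared-error loss, all chosen for illustration rather than taken from the 1986 paper. It computes every gradient with the chain rule and checks one of them against a finite-difference estimate. It then confirms that a single gradient-descent step lowers the loss. The helper `nudge_w1_00` is a hypothetical testing aid, not part of the algorithm.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """2 inputs -> 2 sigmoid hidden units -> 1 sigmoid output."""
    W1, b1, W2, b2 = params
    h = [sigmoid(W1[j][0] * x[0] + W1[j][1] * x[1] + b1[j]) for j in range(2)]
    y = sigmoid(W2[0] * h[0] + W2[1] * h[1] + b2)
    return h, y

def loss(params, x, target):
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2  # squared error

def backprop(params, x, target):
    """Chain rule, applied from the output layer back to the first layer."""
    W1, b1, W2, b2 = params
    h, y = forward(params, x)
    # Error signal at the output pre-activation: dL/dz_out
    delta_out = (y - target) * y * (1.0 - y)
    dW2 = [delta_out * h[j] for j in range(2)]
    db2 = delta_out
    # Send the error back through W2 and each hidden unit's sigmoid derivative
    delta_h = [delta_out * W2[j] * h[j] * (1.0 - h[j]) for j in range(2)]
    dW1 = [[delta_h[j] * x[i] for i in range(2)] for j in range(2)]
    db1 = list(delta_h)
    return dW1, db1, dW2, db2

def sgd_step(params, grads, lr):
    """Move every weight a small step against its gradient."""
    (W1, b1, W2, b2), (dW1, db1, dW2, db2) = params, grads
    return (
        [[W1[j][i] - lr * dW1[j][i] for i in range(2)] for j in range(2)],
        [b1[j] - lr * db1[j] for j in range(2)],
        [W2[j] - lr * dW2[j] for j in range(2)],
        b2 - lr * db2,
    )

def nudge_w1_00(params, eps):
    """Hypothetical test helper: copy params with W1[0][0] shifted by eps."""
    W1, b1, W2, b2 = params
    W1 = [row[:] for row in W1]
    W1[0][0] += eps
    return W1, b1, W2, b2

random.seed(0)
params = (
    [[random.uniform(-1, 1) for _ in range(2)] for _ in range(2)],
    [random.uniform(-1, 1) for _ in range(2)],
    [random.uniform(-1, 1) for _ in range(2)],
    random.uniform(-1, 1),
)
x, t = (1.0, 0.5), 1.0
grads = backprop(params, x, t)

# Central finite difference for the same weight, as an independent check
eps = 1e-6
numeric = (loss(nudge_w1_00(params, eps), x, t)
           - loss(nudge_w1_00(params, -eps), x, t)) / (2 * eps)
print(grads[0][0][0], numeric)  # the two values should agree closely
print(loss(params, x, t), loss(sgd_step(params, grads, 0.1), x, t))  # loss drops
```

Real frameworks automate this bookkeeping with automatic differentiation. The structure is the same: one forward pass, then error signals flowing backward layer by layer.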

Through the 1990s and 2000s, researchers explored architectures with multiple hidden layers, which can model more complex functions. From the late 2000s, graphics processing units (GPUs) accelerated this movement by providing the computational power needed to train on large datasets. As a result, neural networks became far more capable at tasks such as image and speech recognition, driving significant advances in fields ranging from computer vision to natural language processing.

Transformative Applications of Modern Neural Networks

The evolution of neural networks extends beyond theoretical advancements; it has led to tangible outcomes across diverse domains. Today, we witness the transformative power of modern architectures, particularly in areas such as:

- Healthcare: Neural networks analyze medical images with remarkable accuracy, offering the potential for early diagnosis in diseases like cancer.

- Finance: Fraud detection systems powered by neural networks can sift through millions of transactions in real-time, identifying potentially harmful patterns.

- Transportation: Self-driving vehicles leverage sophisticated neural networks to interpret sensory data, making instantaneous driving decisions that enhance safety.

- Entertainment: Recommendation systems utilize deep learning algorithms to tailor content suggestions, enriching user experience on platforms like Netflix and Spotify.

These applications illustrate how the evolution from the Perceptron to sophisticated deep learning architectures has not only expanded the capabilities of neural networks but has also profoundly impacted everyday life. As the technology continues to advance, the urgency to address ethical considerations associated with machine learning grows, reinforcing the need for responsible AI development.

The Evolution of Neural Networks: From Perceptron to Modern Architectures

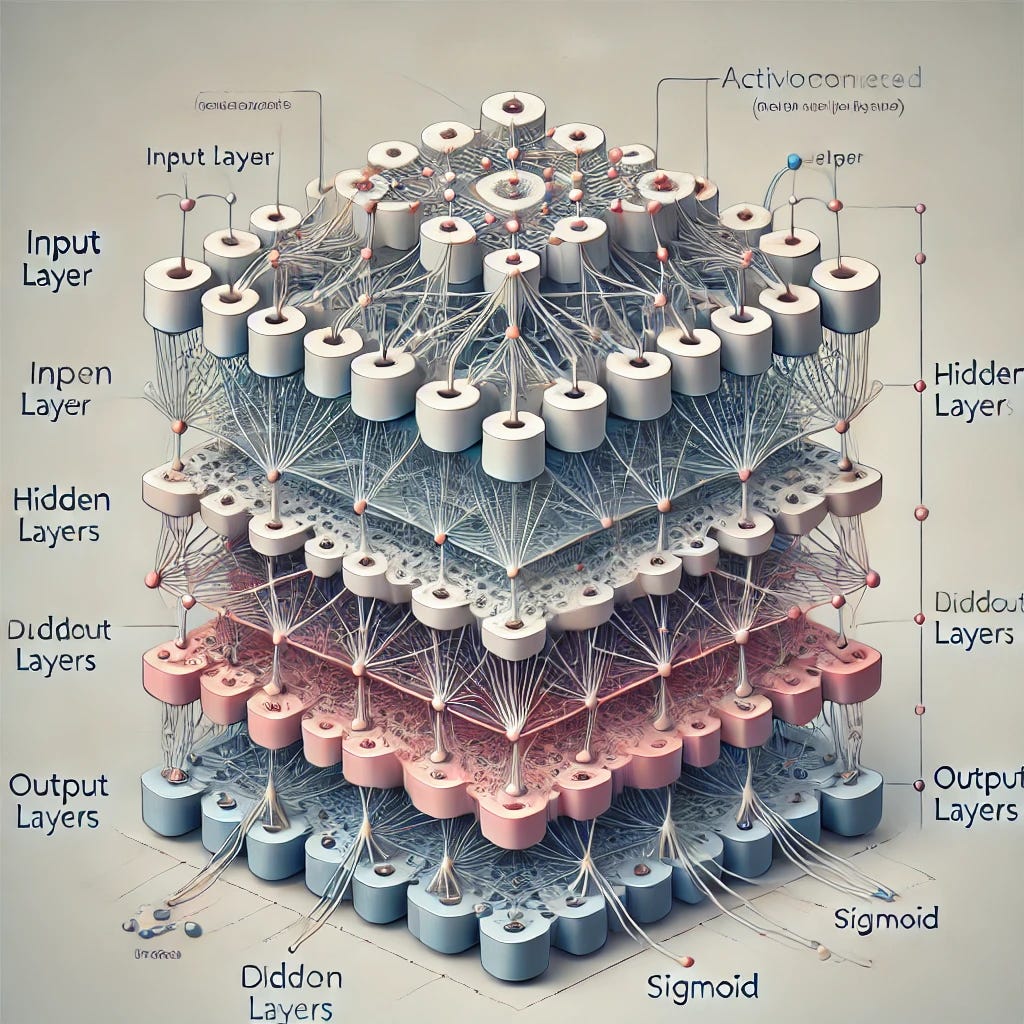

Neural networks have undergone a remarkable transformation since the Perceptron appeared in the late 1950s. Conceived by Frank Rosenblatt, the Perceptron was a binary classifier that sorted data into two categories. While its capabilities were limited, it laid the groundwork for more complex models. The limitations of single-layer perceptrons motivated multilayer architectures, known as multi-layer perceptrons (MLPs). MLPs brought together two key ideas:

- Hidden layers, enabling networks to learn more intricate patterns.

- Backpropagation, which trains every layer efficiently by propagating output errors backward to compute each weight's gradient.
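The payoff of a hidden layer can be shown with a small network whose weights are set by hand rather than learned. In this hypothetical Python sketch, one hidden unit computes OR and the other computes AND. The output unit fires for "OR but not AND", which is exactly the XOR function that a single-layer perceptron cannot represent.

```python
def step(z):
    """Threshold activation, as in the original perceptron."""
    return 1 if z > 0 else 0

def xor_mlp(x1, x2):
    # Hand-picked weights for illustration; training would find its own values.
    h_or = step(x1 + x2 - 0.5)   # hidden unit 1: on if at least one input is on
    h_and = step(x1 + x2 - 1.5)  # hidden unit 2: on only if both inputs are on
    return step(h_or - h_and - 0.5)  # output: OR and not AND == XOR

for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_mlp(a, b))  # prints 0, 1, 1, 0 in the last column
```

Each hidden unit still draws only a straight line. Combining two of them in a second layer carves out the non-linear region XOR needs.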

The late 20th century saw the emergence of more advanced neural networks, such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), each designed to tackle specific types of data. CNNs revolutionized image processing by automating feature extraction, making them invaluable for facial recognition and object detection tasks. Meanwhile, RNNs catered to sequential data, making them pivotal in natural language processing and time series analysis.

As technology evolved, so did the architectures. The rise of deep learning in the mid-2000s marked a significant advance, making it practical to train networks with many layers. Generative Adversarial Networks (GANs) followed in 2014, generating new data instances by pitting two networks against each other.

With the growth of computational power and the availability of vast datasets, modern architectures continue to redefine what neural networks can achieve. Innovations such as transformers have revolutionized natural language processing, leading to impressive advances in machine translation and chatbot technology. Consequently, we are witnessing unprecedented breakthroughs in fields ranging from healthcare to autonomous driving as neural networks continue to evolve.

| Category | Advantages |
|---|---|
| Deep Learning | Enables automatic feature extraction and superior performance on complex tasks. |
| Multi-layer Perceptrons | Enhanced learning capabilities through multiple layers, improving accuracy. |

As we delve deeper into these architectures, the future of neural networks appears bright, with ongoing research pushing the boundaries of what is possible in machine learning and artificial intelligence.

The Rise of Convolutional and Recurrent Neural Networks

As the field of neural networks progressed, researchers sought methods to better mimic the human brain’s complexities. This aspiration led to the development of specialized architectures such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs). These advanced models showcased stunning capabilities in their respective domains, propelling neural networks toward new heights of performance.

Convolutional Neural Networks revolutionized the way computers process visual data. When the AlexNet architecture won the 2012 ImageNet competition by a wide margin, deep learning gained extraordinary visibility. CNNs use convolutional layers that slide small learned filters across an input image. Stacking these layers builds a spatial hierarchy of features, from edges in early layers to complex textures and object parts in deeper ones. As a result, CNNs excel at image classification, object detection, and image segmentation, with significant impact on industries such as autonomous vehicles, security surveillance, and augmented reality.
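The filter-sliding operation can be sketched in plain Python. This is a "valid" (no padding), stride-1 2D cross-correlation, which is what most deep learning libraries compute under the name "convolution". A ReLU follows it. The 3x3 vertical-edge kernel is a hypothetical hand-picked example; in a trained CNN the kernel values are learned.

```python
def conv2d(image, kernel):
    """Valid, stride-1 2D cross-correlation of a 2D list with a 2D kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for r in range(out_h):
        for c in range(out_w):
            # Dot product between the kernel and the image patch under it
            out[r][c] = sum(
                kernel[i][j] * image[r + i][c + j]
                for i in range(kh) for j in range(kw)
            )
    return out

def relu(feature_map):
    return [[max(0.0, v) for v in row] for row in feature_map]

# 5x5 toy image: dark left columns, bright right columns (a vertical edge)
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hypothetical vertical-edge filter: right column minus left column
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]
edges = relu(conv2d(image, kernel))
for row in edges:
    print(row)  # large values where the window straddles the edge, 0 where flat
```

The same small kernel is reused at every position. This weight sharing is what lets CNNs detect a feature wherever it appears in the image.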

By contrast, Recurrent Neural Networks emerged to handle sequential data and temporal dependencies. RNNs carry a hidden state from one time step to the next, which makes them well suited to tasks such as language modeling, machine translation, and speech recognition. Notably, Long Short-Term Memory (LSTM) networks mitigated the long-standing vanishing-gradient problem, allowing these networks to capture long-range dependencies in data effectively. In the United States, companies such as Google and Amazon have used these models to improve natural language processing systems, powering products such as virtual assistants and automated customer-support chatbots.
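A toy example shows both the recurrence and the vanishing-gradient problem. It uses a scalar Elman-style RNN with hypothetical fixed weights. The code carries a hidden state across steps and then computes how much the last state depends on the initial state. By the chain rule, that derivative is a product of one factor per step, each of magnitude at most |W|. With |W| < 1 the product shrinks geometrically. This sketch covers only a vanilla RNN. LSTMs counter the effect with gated, additive cell-state updates, which are not modeled here.

```python
import math

W, U, B = 0.9, 0.5, 0.0  # hypothetical recurrent weight, input weight, bias

def rnn_forward(xs, h0=0.0):
    """Scalar Elman-style RNN: h_t = tanh(W * h_{t-1} + U * x_t + B)."""
    h, hs = h0, []
    for x in xs:
        h = math.tanh(W * h + U * x + B)  # new state mixes old state and input
        hs.append(h)
    return hs

def grad_wrt_initial_state(hs):
    """dh_T/dh_0 via the chain rule: product over steps of W * (1 - h_t**2)."""
    g = 1.0
    for h in hs:
        g *= W * (1.0 - h * h)
    return g

seq = [1.0, -0.5, 0.25] * 20  # toy 60-step input sequence
hs = rnn_forward(seq)
grad_5 = grad_wrt_initial_state(hs[:5])
grad_60 = grad_wrt_initial_state(hs)
print(abs(grad_5), abs(grad_60))  # the 60-step gradient is far smaller
```

Since each factor is at most 0.9 in magnitude here, the 60-step gradient is bounded by 0.9**60, roughly 0.002. A signal that small barely moves the weights, which is why plain RNNs struggle with long-range dependencies.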

Generative Models and the Future of Artificial Intelligence

The evolution of neural networks has also paved the way for innovative generative models such as Generative Adversarial Networks (GANs). Proposed by Ian Goodfellow and colleagues in 2014, GANs consist of two competing neural networks: a generator and a discriminator. The generator creates synthetic data, while the discriminator judges whether each sample is real or generated. This adversarial process can produce remarkably realistic outputs, from striking visual art to deepfakes, attracting admiration and concern in equal measure. The entertainment industry has found creative applications of GANs in digital art and animation, which in turn has prompted discussions about copyright and authenticity.
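The adversarial objective can be expressed as two loss functions, sketched here in Python. The discriminator minimizes binary cross-entropy: it wants D(x) near 1 on real samples and D(G(z)) near 0 on generated ones. The generator uses the common "non-saturating" form, which pushes D(G(z)) toward 1. The discriminator outputs below are made-up numbers, not results from a trained model. A real GAN would compute them with neural networks and alternate gradient steps on the two losses.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for D over batches of probabilities 'sample is real'."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)        # log D(x)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)  # log(1 - D(G(z)))
    return -(real_term + fake_term)

def generator_loss(d_fake):
    """Non-saturating generator loss: minimize -log D(G(z))."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs
confident_d = discriminator_loss(d_real=[0.9, 0.95], d_fake=[0.1, 0.05])
confused_d = discriminator_loss(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])
print(confident_d, confused_d)  # a discriminator that can't tell scores 2*ln(2)
print(generator_loss([0.1]), generator_loss([0.9]))  # G prefers fooling D
```

When the generator's samples become indistinguishable from real data, the best the discriminator can do is output 0.5 everywhere. Its loss then settles at 2 ln 2, about 1.386.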

Another noteworthy advance is the transformer architecture, introduced in the 2017 paper “Attention Is All You Need.” Transformers have become foundational for natural language processing, underpinning models such as BERT and GPT-3. They use self-attention, which dynamically weighs how strongly each word in the context should influence the representation of every other word, improving both language understanding and generation. The implications for American businesses, particularly in tech and marketing, are profound, as transformers enable personalized content and serve as the backbone of sophisticated chatbots and virtual assistants.
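The core of self-attention is scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, sketched here in plain Python. For simplicity it assumes the queries, keys, and values are the token embeddings themselves. Real transformers first apply learned projection matrices and use multiple attention heads, both omitted here. The three 2-dimensional embeddings are hypothetical.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, with each matrix given as a list of row vectors."""
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        weights.append(w)
        # Output = attention-weighted average of the value vectors
        outputs.append([sum(wj * v[c] for wj, v in zip(w, V)) for c in range(len(V[0]))])
    return outputs, weights

# Three toy token embeddings; in self-attention Q, K and V come from the same sequence
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = scaled_dot_product_attention(X, X, X)
for row in attn:
    print([round(p, 3) for p in row])  # each row of weights sums to 1
```

Each row of `attn` shows how much one token draws on every other token. Because the weights come from the data rather than fixed positions, every token can attend directly to any other token, regardless of distance.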

As neural networks continue to evolve, the exploration of hybrid models combining the strengths of CNNs, RNNs, GANs, and transformers will likely lead to even more sophisticated architectures. This evolution holds immeasurable potential for automation, creativity, and problem-solving across various sectors, ushering in a new era of artificial intelligence with capabilities we are just beginning to grasp.

Conclusion: A New Era in Artificial Intelligence

The journey of neural networks has progressed from the simple perceptron to the intricate frameworks that define modern artificial intelligence. As we have seen, Convolutional Neural Networks have revolutionized visual recognition and Recurrent Neural Networks have mastered sequential data, making the versatility and power of these models undeniable. Moreover, Generative Adversarial Networks and transformer architectures have reshaped our understanding of both machine creativity and language processing, opening pathways for compelling applications across diverse industries.

As technology continues to advance, the fusion of these specialized models promises groundbreaking innovations. Companies in the United States are already leveraging these sophisticated neural networks to push boundaries within sectors such as healthcare, finance, and entertainment. However, with these advancements come ethical considerations—issues surrounding data privacy, authenticity, and the potential displacement of jobs are critical discussions that must accompany the rise of artificial intelligence.

Looking ahead, the ongoing evolution of neural networks will likely unlock even more sophisticated capabilities, fueling a future where intelligent machines are integral to our daily lives. Policymakers, researchers, and developers must collaborate to harness this evolution responsibly, ensuring that the benefits of artificial intelligence are widespread and equitable. By embracing both the remarkable achievements and the challenges at hand, we can navigate the future of neural networks and artificial intelligence with purpose and insight.